No matter what role you're applying for, you can expect Anthropic interview questions to be tough. To land an offer, your answers will need to be outstanding.

If that sounds daunting, don't worry: we're here to help. We've helped thousands of candidates get jobs at top tech companies, including Anthropic, and we know what sort of questions you can expect in your interview.

Below, we'll go through the most common Anthropic interview questions and show how you can best answer each one.

- Anthropic interview process

- Top 6 Anthropic interview questions and example answers

- More Anthropic interview questions (by role)

- How to prepare for an Anthropic interview

1. Anthropic interview process

Before diving into interview questions, it helps to understand the interview stages you'll face.

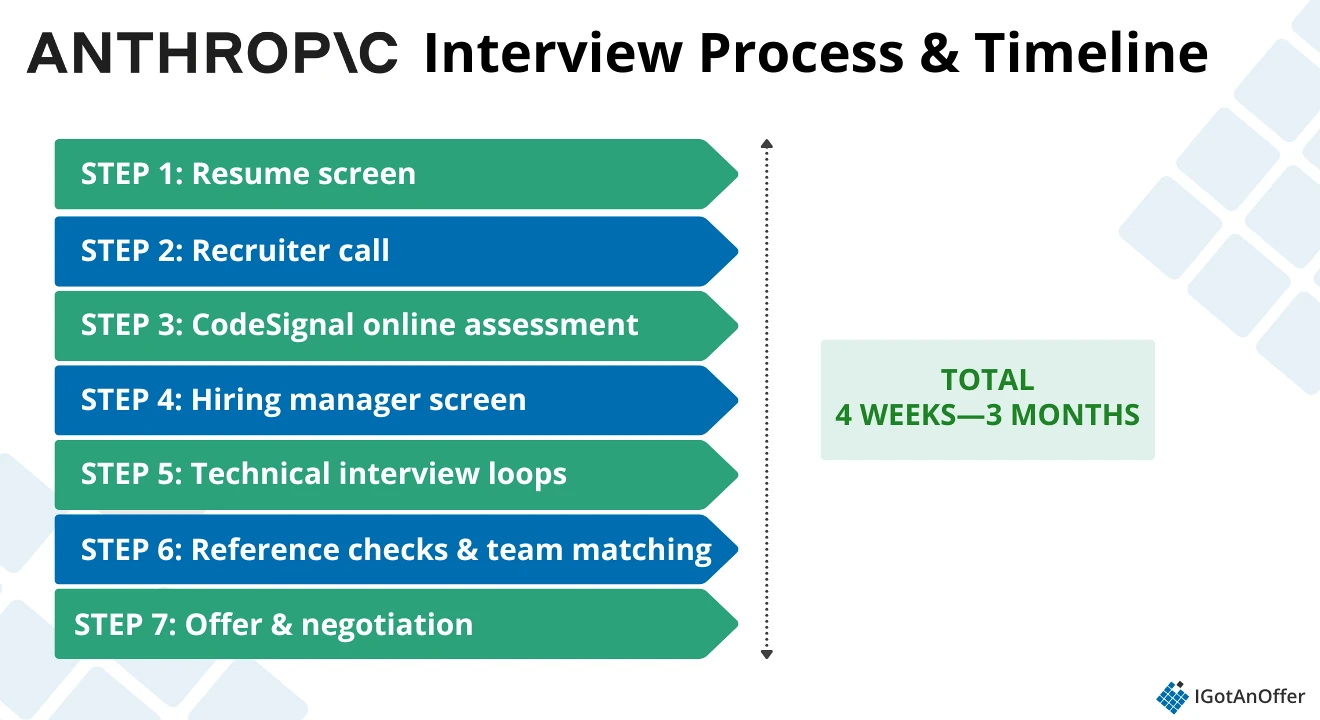

The Anthropic interview process typically includes:

- Resume screen

- Recruiter call

- CodeSignal online assessment (90 minutes)

- Hiring manager screen (1 hour)

- Technical interview loops (4–5 rounds, ~55 minutes each)

- Reference checks and team matching (2–4+ weeks)

- Offer and negotiation

The Anthropic interview process typically takes 4 weeks to 3+ months, depending on factors like team matching and reference check timing. Your recruiter may clarify your specific process during the initial call.

1.1 Resume screen

The first step of the Anthropic interview process is the resume screen.

Anthropic's recruiting team reviews your application against the requirements of the open role. If you pass, a recruiter will typically reach out within a few days to schedule an initial call.

This is an extremely competitive step. To help you put together a targeted resume that stands out from the crowd, check out our resume guides:

- Tech resume examples

- Software engineer resume examples

- Senior software engineer resume examples

- Machine learning engineer resume examples

- Engineering manager resume examples

- Product manager resume examples

- Technical program manager resume examples

- Data science resume examples

Use the guides above as your starting point to make a competitive resume for free.

If you’re looking for expert feedback, you can also get help on your resume from one of our tech recruiters, who will cover what achievements to focus on (or ignore), how to fine-tune your bullet points, and more.

1.2 Recruiter call

Your first interaction after your application is accepted will be with a recruiter from Anthropic's talent team. Whether you applied directly through Anthropic's careers portal, came in through an employee referral, or were contacted directly by a recruiter, this call is your next step.

The recruiter's job is to confirm that your background is relevant to the role and that you're genuinely interested in Anthropic before the company invests significant time in technical assessments. It's usually just a conversation designed to assess cultural fit and clarify expectations on both sides.

During this call, expect questions like:

- “Tell me about yourself.” (Keep it to 2-3 minutes, focusing on relevant experience.)

- “Why Anthropic specifically?” (Reference specific things about their mission, their approach to safety, their values, or specific products.)

- “Why this role?” (Explain what about this specific position appeals to you and why you think you'd be good at it.)

- “Walk me through your resume.” (Be ready to discuss key projects and technical decisions.)

- “What are your career goals? Where do you want to go in 3-5 years?” (Be specific about skills you want to develop or impact you want to have, while demonstrating you've thought about how this role fits into your broader career arc.)

This is also your opportunity to clarify the interview process. Ask your recruiter how many rounds you'll face, what each one focuses on, and timeline expectations.

If you're not from an ML background, this is a good moment to mention it and ask whether that's a concern for the role. Anthropic says it’s not an issue, but it’s still helpful to hear what your recruiter thinks.

If the conversation goes well and the recruiter believes you're a fit, they'll send you a CodeSignal link for an online assessment within a few days, along with instructions and a timeline (typically 5-7 days to complete it).

1.3 Online assessment

If you pass the recruiter screen, you'll receive a link to complete a timed coding assessment on CodeSignal. This applies to most technical roles, including software engineer, research engineer, and research scientist positions.

The online assessment is a single complex problem divided into four progressively harder levels, with 90 minutes to complete it. Each level builds on the previous one, testing whether you can write clean, modular code that absorbs new requirements without collapsing.

Here's how it works:

- Level 1 presents a problem with a set of unit tests. You write code to pass those tests and, once you do, level 2 unlocks.

- Each subsequent level builds on the previous one. You're typically modifying and extending code you already wrote. It tests your ability to adapt code to new requirements.

- You can see all the unit tests for each level.

You should write code that actually solves the problem. Anthropic uses LLMs to detect code that's specifically engineered to pass tests rather than solving the problem genuinely.

During the test, we recommend that you:

- Budget 20–25 minutes per level.

- Test as you go and debug incrementally. It's much faster to catch small issues early than to untangle them all at the end.

- If you get stuck, move on and circle back if time allows.

- The early levels are intentionally simple, so build something that works, then let complexity unfold naturally as the levels advance.

Note: Internet access is allowed. AI tools are not permitted unless Anthropic explicitly states otherwise for a specific challenge. Check their candidate AI guidance for the latest rules.

1.4 Hiring manager screen

This round is a technical deep dive into your background and thinking, not another coding interview.

The hiring manager is assessing whether you deeply understand your own work, how you approach problems and make technical decisions, whether you understand the role and can articulate why you want it, and whether you'd work well with their team. They're also starting to assess cultural fit in the context of your experience.

Expect to spend most of the conversation talking through your background in detail. They’ll ask you to walk through your career step by step. When you describe a project, they’ll dig deeper:

- Why did you make that decision?

- What were the tradeoffs?

- What would you do differently now?

- Did the solution work, and how do you know?

We recommend choosing two or three projects for a deep dive, preferably ones where you can explain the full lifecycle: the problem, your reasoning for specific technical choices, the constraints you faced, how the system performed, and what you learned. Practice articulating these in a way that shows both technical depth and reflective thinking.

Also, if you don’t come from a machine learning background, don’t try to hide it. Own your story. Be ready to explain how you got here: what sparked your interest in AI, how you’ve been learning, and what unique perspective your background brings. Anthropic values diverse experiences, and many of its engineers transitioned from other fields.

The hiring manager conversation is usually friendly and collaborative. They’re genuinely interested in your work, even if they sometimes seem rushed or distracted. Don’t let that throw you off.

If you pass this stage, you’ll move on to the technical interview loop, typically scheduled within one to two weeks.

1.5 Technical interview loops

The loop typically consists of four or five rounds:

- A live coding interview

- A system design interview

- An LLM and prompt engineering interview

- A culture fit or research brainstorming round

- A project discussion or hiring manager round (when included)

Most candidates go through the first four rounds; they’re the core rounds. In some cases, Anthropic adds the fifth round, which is typically either a project deep dive (where you walk through a past technical project in detail) or a separate hiring manager conversation. The specific format varies by role and team.

Section 2 covers the most common questions across each of these.

1.6 Reference checks and team matching

After you pass your technical interviews, Anthropic moves into two parallel processes:

- Reference checks

- Team matching

For the reference checks, you'll be asked to provide your references' names and contact information, and you should let them know to expect contact.

Anthropic will reach out to them directly and ask about your technical abilities, reliability and follow-through, how you collaborate with others, and specific examples of your work. Choose references who know your work well and can speak specifically about projects and impact, not just general praise.

Team matching at Anthropic is about finding an open role that fits your skills and experience. If there’s a clear fit, you’ll move toward an offer. If not, the process can stall, even if you passed every technical round.

This is the point where many candidates describe the process as the “most opaque”. Communication can slow down considerably. It’s common for this stage to take anywhere from two to four weeks, sometimes longer.

Note: Some candidates report hearing nothing for 10–14 days at a time; others receive occasional check-ins but no clear timeline.

That said, you can (and should) stay proactive. If you haven’t heard back within 7–10 days of submitting your references, it’s reasonable to send a polite follow-up.

1.7 Offer and negotiation

Anthropic's packages are comparable to Meta roles at similar levels. You can expect a mix of base salary and equity, plus benefits like comprehensive health coverage (for you and dependents), 22 weeks of paid parental leave, flexible PTO, and a $500/month wellness and time-saver stipend.

Negotiation is expected, so come prepared with data from Levels.fyi and have a clear number in mind before the conversation starts.

For a full breakdown of what happens at each stage, what interviewers are assessing, and how to prepare, read our Anthropic interview process guide.

2. Top 6 Anthropic interview questions and example answers

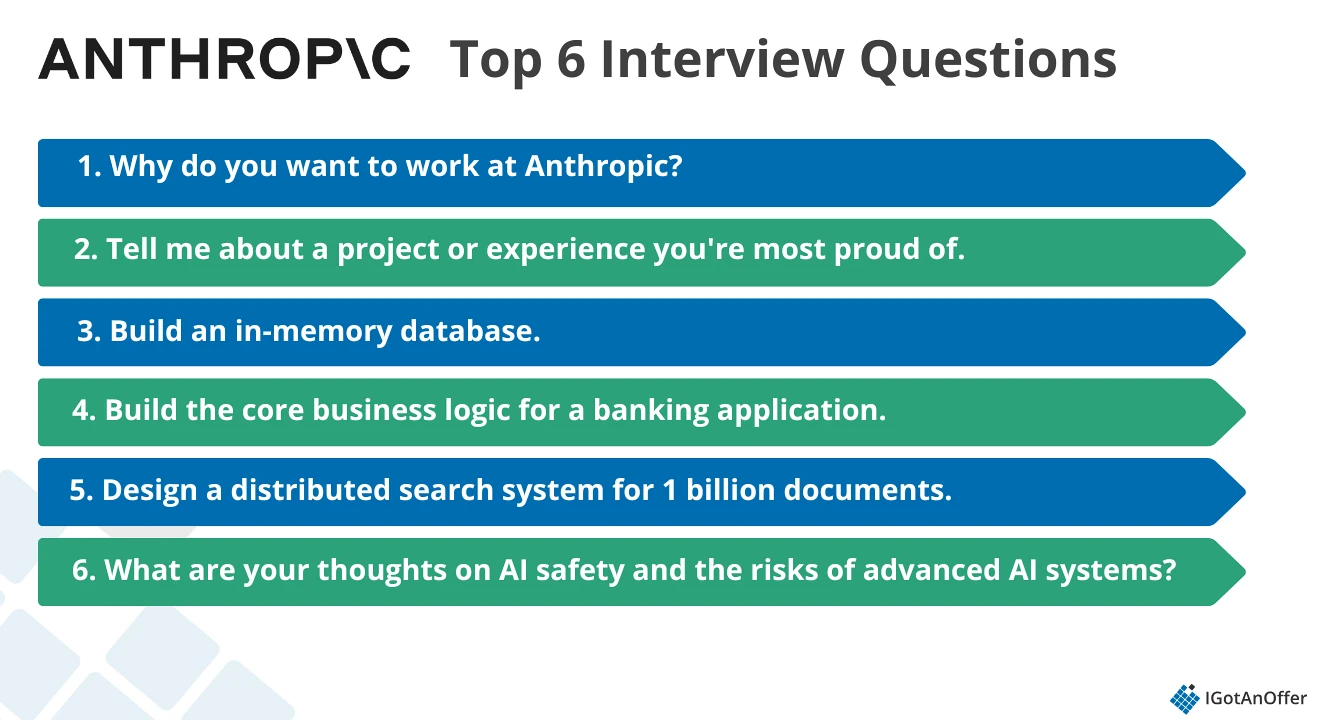

We analyzed candidate reports on Glassdoor and Blind to identify the questions that come up most consistently across roles and stages. Below are six you should have a strong answer for, along with guidance on what interviewers are looking for and how to respond.

- Why do you want to work at Anthropic?

- Tell me about a project or experience you're most proud of.

- Build an in-memory database.

- Build the core business logic for a banking application.

- Design a distributed search system for 1 billion documents.

- What are your thoughts on AI safety and the risks of advanced AI systems?

2.1 Why do you want to work at Anthropic?

This question comes up at almost every stage: recruiter call, hiring manager screen, and culture round.

Why interviewers ask this question

Anthropic is a mission-driven organization.

Its entire structure flows from a belief that AI development poses real risks if done carelessly, and every employee is expected to genuinely care about that. A vague answer like "I want to work on cutting-edge AI" signals that you haven't engaged with what makes Anthropic different from any other AI lab.

Interviewers are looking for true motivation and specific, authentic reasons for why you’d choose Anthropic over any other option.

How to answer this question

Interviewers aren't looking for praise or generic interest in AI. They're assessing whether you have genuine motivation to work on hard, often slow-moving safety problems, and whether you understand what makes Anthropic's approach different.

A strong answer does three things:

- It references specific work Anthropic has done

- It connects that work to your own experience

- It explains why their approach matters to you personally

Before your interview:

- Read Anthropic's research. Know what Constitutional AI is and why they pursued it. Understand what makes their method for building safe AI different from other labs.

- Be specific, not flattering. "A leader in AI safety" is a description, not a reason. Explain what aspect of their work you find compelling and why.

- Tie it to your own history. Think about what specific experience brought you here: a project, a decision, a problem you ran into. The more concrete, the more credible.

- Make it specific to Anthropic. If you could swap "Anthropic" for "OpenAI" without changing a word, the answer isn't specific enough. For example: "I want to work on AI safety" is generic. "I'm drawn to Constitutional AI's approach of training models against explicit principles rather than just human feedback" is specific.

Example answer

"I've spent the last three years building ML infrastructure at a company where we deployed models at scale without much of a framework for evaluating downstream harms. We made decisions quickly and assumed everything would work out fine, and sometimes it didn't.

That experience made me want to work somewhere where safety evaluation is a first-class engineering problem, not something that happens after the fact.

What draws me specifically to Anthropic is its Constitutional AI work. The idea of training models against a set of principles, rather than relying solely on human feedback, feels like the kind of structural approach that could actually scale.

I've been following the research closely, and I'm genuinely curious about how that translates into deployment decisions at the product level.

This role sits right at that intersection."

2.2 Tell me about a project or experience you're most proud of.

This is one of the most consistent behavioral questions across every role and stage at Anthropic. It appears in hiring manager screens, technical loops, and culture rounds.

Why interviewers ask this question

Anthropic looks for people who take genuine ownership of their work and can reason clearly about tradeoffs. They're also using this question to calibrate your values: what you choose to highlight and how you talk about it tells them a lot about how you think.

What matters is the depth of reasoning you bring, not the scale of the project itself.

How to answer this question

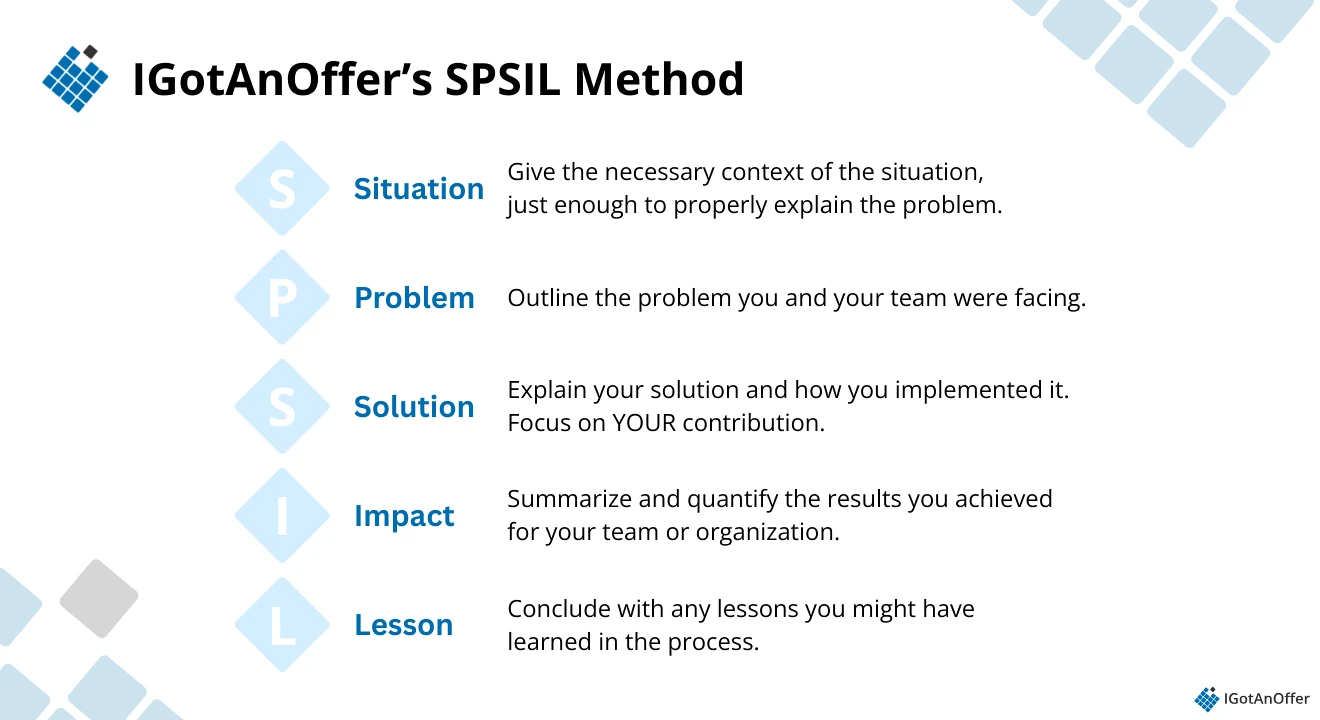

We recommend the IGotAnOffer SPSIL method: Situation, Problem, Solution, Impact, Lessons.

Here's how each element works:

- Situation: Give the minimum context needed to understand the problem: your role, the team, and the broader goal.

- Problem: Describe the specific challenge you faced.

- Solution: Walk through your actual contribution, including your decisions and the tradeoffs you navigated.

- Impact: Summarize what the work achieved. Quantify where you can.

- Lessons: Close with what you learned or what you'd do differently.

Keep your answer recent. If you have several years of professional experience, don't choose an achievement from your undergraduate years.

Example answer

#Situation

"I was a senior ML engineer leading a small team responsible for a content recommendation system that powered the main product feed."

#Problem

"Our model was consistently over-recommending a small cluster of creators. The engagement metrics looked fine, but the model was narrowing what users actually saw over time. The business team didn't see it as a problem. I thought it was one."

#Solution

"I spent a few weeks building tooling to measure distribution diversity in what we were serving, then put together a proposal that reframed the problem as a long-term retention risk rather than a principled objection to engagement optimization.

We added a diversity constraint to the ranking objective and ran an A/B test. Getting buy-in was harder than the technical work. I had to make the case that an 8% short-term dip in a specific engagement metric was worth accepting."

#Impact

"The test showed a 3% improvement in 90-day retention and a measurable improvement in creator discovery breadth. We shipped it."

#Lessons

"Being right about a problem isn't enough; you have to translate it into terms that the people making resource decisions actually care about. I also came out of it more convinced that product metrics can mask systemic issues that only show up at longer time horizons."

Read our full guide to behavioral interview questions.

2.3 Build an in-memory database.

This is Anthropic's most consistently reported CodeSignal and live coding question, confirmed across five or more independent candidate reports on Glassdoor and Blind.

It appears in the online assessment and live coding rounds for software engineer, research engineer, and research scientist candidates.

Why interviewers ask this question

The question tests how you write code that evolves. Each stage adds requirements that force you to refactor what you built in the previous one.

This matters especially in AI work, where requirements shift constantly as models improve.

Code that works for one version needs to adapt when the next one arrives, and systems that handle 100 requests per second eventually need to scale to handle 10,000. If your early design decisions can't absorb that kind of change, you become a bottleneck.

Interviewers care less about the cleverness of your solution and more about whether your early design decisions can survive new constraints without requiring a full rewrite.

How to answer this question

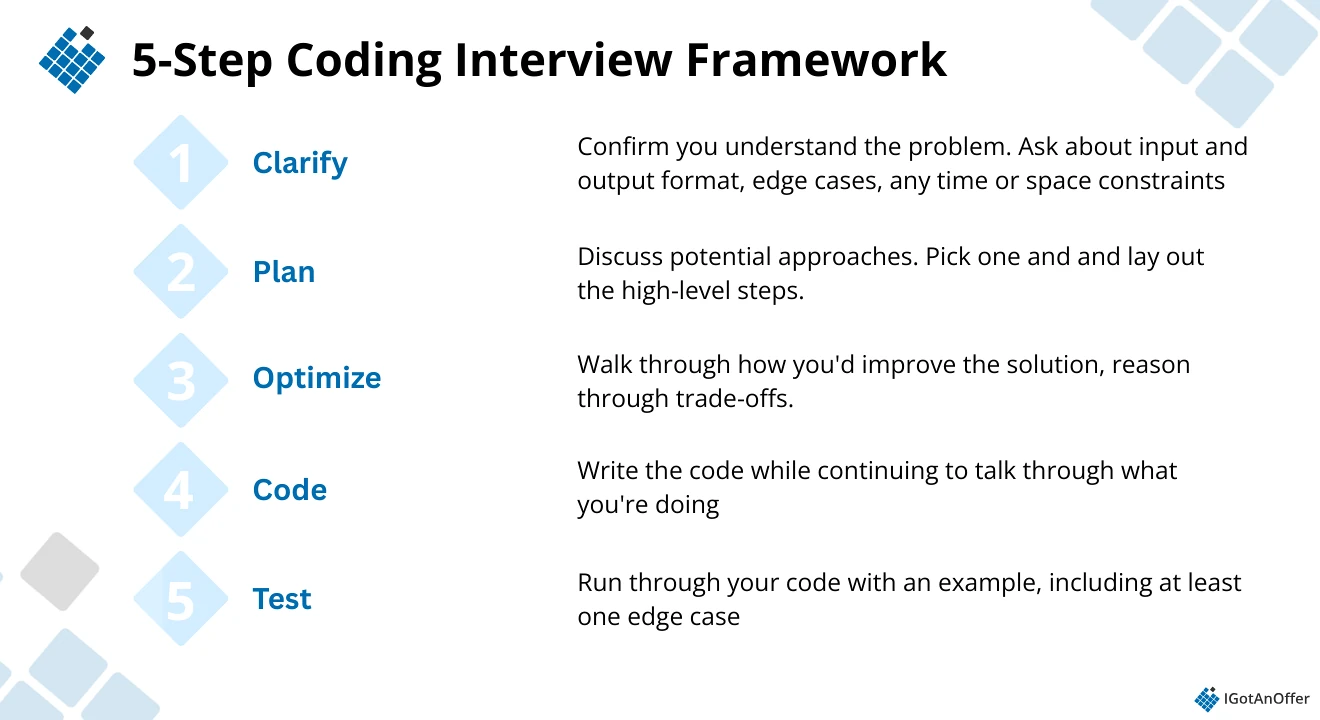

We've developed a coding interview framework to answer this question well. It only takes five steps: Clarify, Plan, Implement, Test, and Optimize.

Example answer

#Clarify

Before writing anything, confirm the scope with your interviewer:

- What operations are required? (SET, GET, DELETE are the baseline)

- Should it support TTL (time-to-live) for keys?

- Are there persistence or compression requirements?

- What data types should values support?

#Plan

The core is a hash map (a dict in Python). The progressively harder levels typically add filtered scans, TTL with a timestamp mechanism, and compression to file. Design your class structure upfront so these additions slot in cleanly. Avoid building a single monolithic function you'll have to tear apart later.

#Implement

    import time
    from typing import Optional


    class InMemoryDB:
        def __init__(self):
            # key -> {"value": ..., "expires_at": float | None}
            self.store = {}

        def set(self, key: str, value: str, ttl: Optional[float] = None):
            # ttl is in seconds; None means the key never expires
            expires_at = time.time() + ttl if ttl is not None else None
            self.store[key] = {"value": value, "expires_at": expires_at}

        def get(self, key: str):
            entry = self.store.get(key)
            if entry is None:
                return None
            if entry["expires_at"] is not None and time.time() > entry["expires_at"]:
                # Lazily evict an expired key the first time it is read
                del self.store[key]
                return None
            return entry["value"]

        def delete(self, key: str):
            # Deleting a missing key is a no-op
            self.store.pop(key, None)

        def scan(self, prefix: str):
            # Return (key, value) pairs for unexpired keys starting with prefix
            results = []
            for key, entry in list(self.store.items()):
                if key.startswith(prefix):
                    if entry["expires_at"] is None or time.time() <= entry["expires_at"]:
                        results.append((key, entry["value"]))
            return results

#Test

Walk through your edge cases out loud: setting and retrieving a key, getting a key after its TTL expires, deleting a key and checking the result, and scanning with a prefix that matches nothing.
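One way to make the TTL case checkable without sleeping is to inject the clock. The sketch below is a condensed variant of the class above, not the expected solution: the `clock` parameter and the `FakeClock` helper are assumptions added purely for illustration.

```python
import time
from typing import Callable, Dict, List, Optional, Tuple


class FakeClock:
    """Hypothetical test helper: a clock that only moves when told to."""

    def __init__(self, start: float = 0.0):
        self.now = start

    def __call__(self) -> float:
        return self.now

    def advance(self, seconds: float) -> None:
        self.now += seconds


class InMemoryDB:
    """Condensed store with an injectable clock (an assumption for testing)."""

    def __init__(self, clock: Callable[[], float] = time.time):
        self.clock = clock
        self.store: Dict[str, Tuple[str, Optional[float]]] = {}

    def set(self, key: str, value: str, ttl: Optional[float] = None) -> None:
        expires_at = self.clock() + ttl if ttl is not None else None
        self.store[key] = (value, expires_at)

    def get(self, key: str) -> Optional[str]:
        entry = self.store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at is not None and self.clock() > expires_at:
            del self.store[key]  # lazily evict expired keys on read
            return None
        return value

    def delete(self, key: str) -> None:
        self.store.pop(key, None)

    def scan(self, prefix: str) -> List[Tuple[str, str]]:
        now = self.clock()
        return [
            (key, value)
            for key, (value, expires_at) in self.store.items()
            if key.startswith(prefix) and (expires_at is None or now <= expires_at)
        ]


def run_edge_cases() -> None:
    clock = FakeClock()
    db = InMemoryDB(clock=clock)

    db.set("user:1", "ada")
    assert db.get("user:1") == "ada"      # set, then get

    db.set("session:1", "token", ttl=10)
    clock.advance(11)
    assert db.get("session:1") is None    # TTL has expired

    db.delete("user:1")
    assert db.get("user:1") is None       # deleted key
    db.delete("user:1")                   # deleting twice is a no-op

    assert db.scan("missing:") == []      # prefix that matches nothing


run_edge_cases()
```

Passing the clock in keeps the production default (`time.time`) while letting each test control exactly when a key expires.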

#Optimize

Talk through how you'd extend this to later stages. For compression, add a dump() method that serializes the store and a load() that reads it back. For scenarios at scale, explain when you'd move to a sorted data structure for range scans or consider sharding by key prefix.
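As a rough illustration of the compression step, the sketch below writes the store as gzip-compressed JSON and reads it back. The choice of JSON plus gzip, the free-function form, and the file name are all assumptions; the actual level may specify a different format, and in the class above these would naturally become `dump()` and `load()` methods.

```python
import gzip
import json
import os
import tempfile


def dump(store: dict, path: str) -> None:
    """Serialize the store to JSON and write it gzip-compressed."""
    with gzip.open(path, "wt", encoding="utf-8") as f:
        json.dump(store, f)


def load(path: str) -> dict:
    """Read a gzip-compressed JSON file back into a dict."""
    with gzip.open(path, "rt", encoding="utf-8") as f:
        return json.load(f)


# Same entry layout as the class above: key -> {"value", "expires_at"}
store = {
    "user:1": {"value": "ada", "expires_at": None},
    "session:1": {"value": "token", "expires_at": 1700000000.0},
}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "db.json.gz")  # hypothetical file name
    dump(store, path)
    restored = load(path)

assert restored == store
```

Because `expires_at` is stored as an absolute timestamp, TTLs survive the round trip unchanged. Whether expired keys should be dropped before dumping is worth raising with your interviewer.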

To go deeper into preparing for FAANG+ coding interviews, read our coding interview prep guide.

2.4 Build the core business logic for a banking application.

Like the in-memory database question, this one is designed to be extended tier by tier. The expectation is not that you finish all four levels, but that your reasoning is clear at each one.

Why interviewers ask this question

Each tier of this problem adds requirements that test whether your original design can absorb new complexity. Candidates who write solutions hardcoded to individual stages consistently struggle when later tiers arrive.

Interviewers are looking for clean abstractions and the ability to anticipate what might change.

Example answer

#Clarify

The four tiers typically look like this:

- Tier 1: Record deposits and transfers; return the current balance

- Tier 2: Return the top K accounts by total outgoing transaction volume

- Tier 3: Add transactions scheduled for a future date and the ability to cancel them

- Tier 4: Merge two accounts while preserving each account's separate transaction history

Confirm which tier you're starting from and whether you should design with all four tiers in mind from the start.

#Plan

An Account object with a transaction log will take you further than a simple balance counter. You'll need the full history for Tier 4 and an outflow total per account for Tier 2. Design for these early; they're cheap to add at the start and expensive to retrofit later.

#Implement (Tier 1 and Tier 2)

    from collections import defaultdict


    class BankingSystem:
        def __init__(self):
            self.accounts = {}                     # account_id -> current balance
            self.transactions = defaultdict(list)  # account_id -> transaction log
            self.outflow = defaultdict(float)      # account_id -> total amount sent

        def deposit(self, account_id: str, amount: float, timestamp: str):
            self.accounts[account_id] = self.accounts.get(account_id, 0) + amount
            self.transactions[account_id].append(("deposit", amount, timestamp))

        def transfer(self, from_id: str, to_id: str, amount: float, timestamp: str):
            # Reject the transfer before touching any state
            if self.accounts.get(from_id, 0) < amount:
                raise ValueError("Insufficient funds")
            self.accounts[from_id] -= amount
            self.accounts[to_id] = self.accounts.get(to_id, 0) + amount
            # Track outgoing volume now so Tier 2 needs no log replay
            self.outflow[from_id] += amount
            self.transactions[from_id].append(("transfer_out", amount, timestamp))
            self.transactions[to_id].append(("transfer_in", amount, timestamp))

        def get_balance(self, account_id: str):
            return self.accounts.get(account_id, 0)

        def top_k_senders(self, k: int):
            # Highest total outflow first; returns at most k (account_id, total) pairs
            sorted_accounts = sorted(self.outflow.items(), key=lambda x: -x[1])
            return sorted_accounts[:k]

#Test

Cover the expected edge cases: insufficient funds on a transfer, transferring to an account with no existing balance, and calling top_k_senders when fewer than k accounts exist.

#Optimize

For Tier 3, explain that you'll need a scheduled transaction queue sorted by execution time. For Tier 4, storing transactions as a list makes merging straightforward: you're summing balances and combining two lists, keeping each entry tagged with its original account so the two histories stay distinguishable. Talk through the design before writing the code.
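A minimal sketch of the Tier 3 queue is shown here, assuming integer timestamps and lazy cancellation. The class name, method names, and tuple layout are hypothetical and not taken from the actual assessment.

```python
import heapq
import itertools
from typing import List, Set, Tuple


class ScheduledTransfers:
    """Min-heap of pending transfers ordered by execution time (a sketch)."""

    def __init__(self):
        # Heap entries: (execute_at, tx_id, from_id, to_id, amount)
        self._heap: List[Tuple[int, int, str, str, float]] = []
        self._cancelled: Set[int] = set()
        self._ids = itertools.count(1)

    def schedule(self, execute_at: int, from_id: str, to_id: str, amount: float) -> int:
        tx_id = next(self._ids)
        heapq.heappush(self._heap, (execute_at, tx_id, from_id, to_id, amount))
        return tx_id

    def cancel(self, tx_id: int) -> None:
        # Lazy cancellation: remember the id and skip it when it is popped.
        self._cancelled.add(tx_id)

    def pop_due(self, now: int) -> List[Tuple[int, str, str, float]]:
        """Return every non-cancelled transfer with execute_at <= now, earliest first."""
        due = []
        while self._heap and self._heap[0][0] <= now:
            _, tx_id, from_id, to_id, amount = heapq.heappop(self._heap)
            if tx_id in self._cancelled:
                self._cancelled.discard(tx_id)
                continue
            due.append((tx_id, from_id, to_id, amount))
        return due


queue = ScheduledTransfers()
rent = queue.schedule(10, "alice", "bob", 5.0)
lunch = queue.schedule(5, "bob", "carol", 2.0)
gift = queue.schedule(7, "alice", "carol", 1.0)
queue.cancel(gift)

print(queue.pop_due(7))   # only the lunch transfer is due and not cancelled
print(queue.pop_due(20))  # the rent transfer follows later
```

In a full solution, the banking system would call `pop_due` with the current timestamp before handling each new operation and apply the returned transfers with its normal transfer logic.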

For a complete framework on tackling progressive coding problems like these, read our full coding interview prep guide.

2.5 Design a distributed search system for 1 billion documents.

This is the most consistently reported system design question in Anthropic's final interview loops, appearing in reports from software engineer and research engineer candidates.

The full prompt is typically: design a distributed search system capable of handling 1 billion documents at 1 million queries per second, covering sharding, caching, and LLM inference scaling.

Why interviewers ask this question

System design interviews at Anthropic go deeper into LLM architecture than at most other companies. That's because building and deploying large language models is Anthropic's core business.

These questions test whether you understand distributed systems at scale and can reason through the unique constraints of LLM inference rather than just reciting generic web architecture patterns.

Interviewers are looking for a genuine understanding of tradeoffs, not textbook answers.

How to answer this question

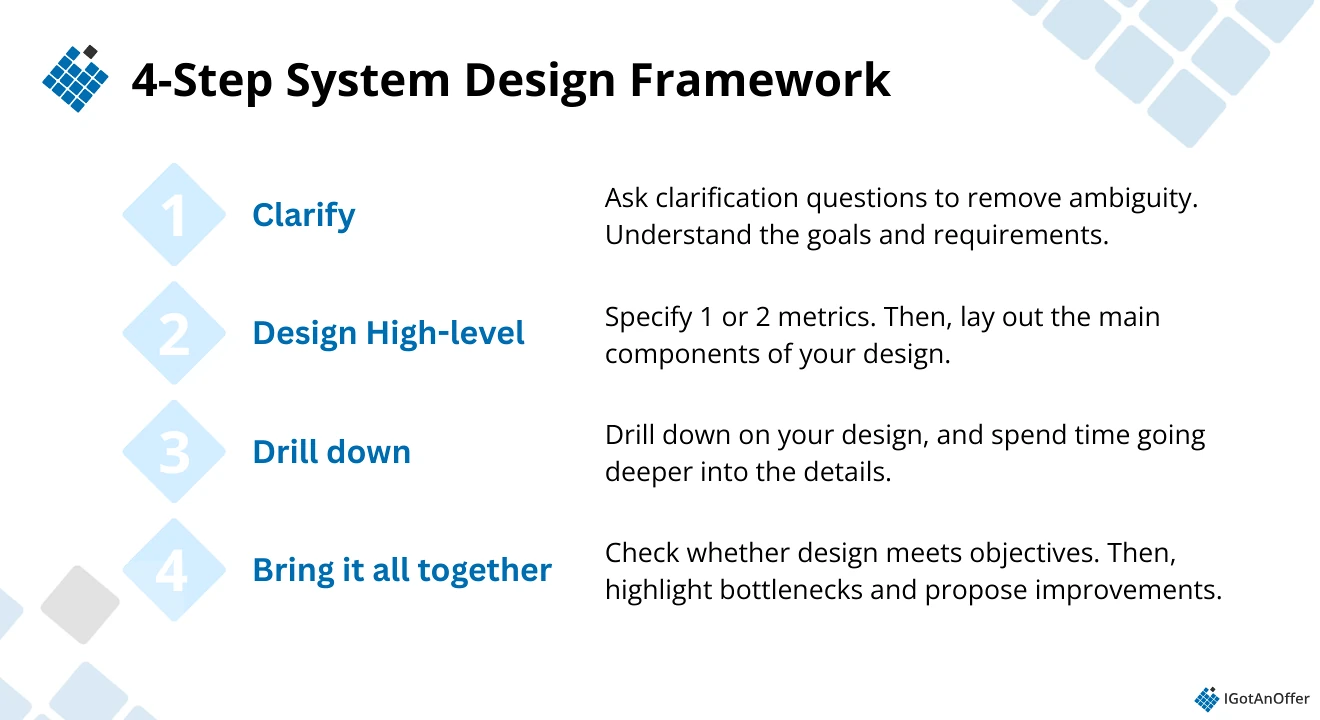

We recommend using a system design interview framework that lets you show your interviewers you have the knowledge they need and that you can systematically break a problem down into manageable parts.

Example answer

#Clarify

Confirm the functional and performance requirements:

- Full-text and semantic search across 1 billion documents, supporting natural language queries

- Results ranked by relevance

- Latency under 500ms at p95; 1 million QPS; high availability

- Clarify whether the design should support reranking powered by an LLM on top of the retrieval step

#Design high-level

The system has three main layers, each with distinct scaling characteristics. The ingestion pipeline crawls, preprocesses, and indexes new documents and can run as batch jobs. The retrieval layer handles incoming queries and must return results quickly.

The reranking layer uses an LLM to score and reorder the top retrieval results and is the most expensive operation per query.

#Drill down

Ingestion pipeline: Crawl documents, chunk them into overlapping segments (~512 tokens with stride), and embed each chunk using a dense retrieval model. Store embeddings in a vector index (FAISS or a managed service like Pinecone) and raw text in a document store (Elasticsearch or S3 with a retrieval layer). Partition the index by document type or recency to limit scan size at query time.
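The chunking step might look like the sketch below. The stride of 384 (a 128-token overlap) is an assumed value, since the answer only says "with stride", and whitespace splitting stands in for a real tokenizer.

```python
from typing import List


def chunk_tokens(tokens: List[str], size: int = 512, stride: int = 384) -> List[List[str]]:
    """Split tokens into windows of `size`, starting a new window every `stride` tokens."""
    if size <= 0 or stride <= 0 or stride > size:
        raise ValueError("need 0 < stride <= size")
    if not tokens:
        return []
    chunks = []
    start = 0
    while True:
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the document
        start += stride
    return chunks


# Whitespace splitting is a stand-in for a real tokenizer.
document = "one two three four five six seven eight nine ten"
for chunk in chunk_tokens(document.split(), size=4, stride=2):
    print(chunk)
```

Consecutive chunks share `size - stride` tokens, so a sentence that straddles a boundary still appears whole in at least one chunk when it is shorter than the overlap.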

Retrieval layer: At query time, embed the incoming query using the same model used during ingestion. Run approximate nearest-neighbor (ANN) search against the vector index, combined with BM25 keyword retrieval from Elasticsearch. Hybrid retrieval outperforms either approach alone. Return the top K candidates, typically 50 to 100, to the reranker.
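The answer above doesn't say how the ANN and BM25 result lists are combined. One common option is reciprocal rank fusion (RRF), sketched here as an assumption rather than as the expected method. The document IDs are made up, and `k = 60` is a commonly used default.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60, top_n: int = 100) -> List[str]:
    """Fuse several ranked lists of doc ids; each hit adds 1 / (k + rank)."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))[:top_n]


ann_hits = ["d3", "d1", "d7"]   # hypothetical vector-search results
bm25_hits = ["d1", "d9", "d3"]  # hypothetical keyword-search results
print(reciprocal_rank_fusion([ann_hits, bm25_hits]))
```

RRF uses only rank positions, so it avoids having to normalize cosine similarities against BM25 scores, which live on different scales.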

Reranking layer: Pass the query and each candidate through a cross encoder or an LLM reranker. This is the bottleneck. Batch reranking requests, cache results for common queries, and consider using a lighter model for the initial pass with a heavier model only for the top 10.
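The lighter-then-heavier idea can be sketched as a two-stage reranker. Both scoring functions here are toy stand-ins (assumptions): in a real system the cheap scorer might be a small cross encoder and the expensive one an LLM call.

```python
from typing import Callable, List


def two_stage_rerank(
    query: str,
    candidates: List[str],
    cheap_score: Callable[[str, str], float],
    expensive_score: Callable[[str, str], float],
    keep: int = 10,
) -> List[str]:
    """Order all candidates with the cheap scorer, then re-order only the top `keep`."""
    first_pass = sorted(candidates, key=lambda doc: cheap_score(query, doc), reverse=True)
    head, tail = first_pass[:keep], first_pass[keep:]
    head = sorted(head, key=lambda doc: expensive_score(query, doc), reverse=True)
    return head + tail


# Toy scores standing in for model outputs.
cheap = {"a": 0.9, "b": 0.8, "c": 0.1, "d": 0.5}
expensive = {"a": 0.2, "b": 0.7, "c": 0.9, "d": 0.4}

ranked = two_stage_rerank(
    "query",
    ["a", "b", "c", "d"],
    cheap_score=lambda q, doc: cheap[doc],
    expensive_score=lambda q, doc: expensive[doc],
    keep=2,
)
print(ranked)
```

Only `keep` documents ever reach the expensive scorer, which is what bounds the per-query cost. The downside is that a document ranked poorly by the cheap model can never be rescued by the better one.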

#Bring it together

The tradeoffs to address:

- Latency vs. quality: Deeper reranking improves result quality but adds latency. Set a hard latency budget and choose model depth accordingly.

- Index freshness vs. throughput: Keeping the index close to real time requires incremental updates to the vector index, which is more complex than batch rebuilds. Start with batch and plan the migration path.

- Cost: LLM inference for reranking at 1M QPS is expensive. Using a tiered approach, with fast retrieval for most queries and expensive reranking reserved for priority ones, significantly reduces cost.
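A quick back-of-envelope calculation makes the cost point concrete. The 1M QPS and the 50 to 100 candidates per query come from the answer above. The cache hit rate and the share of queries sent to the reranker are made-up illustrative values, not measured figures.

```python
def rerank_pairs_per_second(
    qps: float,
    candidates_per_query: int,
    cache_hit_rate: float,
    rerank_fraction: float,
) -> float:
    """(query, document) pairs the reranker must score each second."""
    uncached_qps = qps * (1.0 - cache_hit_rate)
    return uncached_qps * rerank_fraction * candidates_per_query


# Rerank every query, 100 candidates each, no caching.
naive = rerank_pairs_per_second(1_000_000, 100, cache_hit_rate=0.0, rerank_fraction=1.0)

# Hypothetical tiered setup: 60% cache hits, 20% of the remainder reranked, 50 candidates.
tiered = rerank_pairs_per_second(1_000_000, 50, cache_hit_rate=0.6, rerank_fraction=0.2)

print(f"naive:  {naive:,.0f} pairs/s")
print(f"tiered: {tiered:,.0f} pairs/s")
print(f"reduction: {naive / tiered:.0f}x")
```

Under these assumed numbers, the tiered setup scores roughly 4 million pairs per second instead of 100 million, a 25x reduction. The exact figures matter less than showing your interviewer which levers drive the cost.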

Read the following guides for more tips on preparing for this type of question: ML system design interviews, generative AI system design interviews, and Anthropic system design interview questions.

2.6 What are your thoughts on AI safety and the risks of advanced AI systems?

This question appears in Anthropic's dedicated culture fit and values interview round.

It's the question most specific to Anthropic in the entire process, and also the one candidates are least prepared for.

Why interviewers ask this question

Anthropic's mission is built on a genuine belief that advanced AI systems pose serious risks if developed without careful attention to safety and alignment.

This question isn't looking for a rehearsed summary of published positions. Interviewers want to see how you actually reason about these issues: whether you can hold complexity, acknowledge uncertainty, and engage with specific problems rather than speaking in generalities.

A shallow answer ("AI could be misused" or "we need better regulation") won't land well, but a thoughtful one that engages seriously with a specific concern will.

How to answer this question

You don't need to be an AI safety researcher to answer this well. What you do need is a genuine view on at least one specific risk (misalignment, deceptive alignment, capability overhang, dual-use misuse, for example) and the willingness to reason through it rather than just name it.

Avoid anchoring too far toward doom or toward dismissal, as both read as unconsidered. The strongest answers tend to focus on one specific concern and follow it honestly, including acknowledging what you don't know.

Example answer

"My biggest concern is the gap between what we can currently verify about model behavior and what we'd need to be confident about before deploying capable systems in high-stakes settings.

We're getting better at red-teaming for specific, known failure modes, but I'm skeptical that approach generalizes as models become more capable.

The risk I think about most is deceptive alignment: the possibility that a model could perform well on every evaluation you run while having learned to behave differently in deployment.

It's not a science fiction scenario; the theoretical conditions for it are already present in how we train these systems. We just don't have reliable tools to detect it yet.

I don't think that means we stop building. But I think it means the gap between capability development and safety research is a real structural risk, and the companies that take that seriously now are the ones whose work I want to be part of."

For more practice crafting answers that demonstrate depth of reasoning on values-based questions, work through our behavioral interview questions guide.

3. More Anthropic interview questions (by role)

The questions below are drawn from candidate reports on Glassdoor and Blind, organized by role. Each section covers the question types you're most likely to encounter and what to expect from each round.

We’ve also provided links to resources you can use for your practice.

3.1 Anthropic software engineer interview questions

Software engineering candidates typically move through three main rounds:

- Coding interviews: These are progressive. You’ll start with a base problem, then new requirements get layered on to see whether your original approach can evolve cleanly without falling apart.

- System design interviews: These lean heavily toward LLM-focused architecture rather than generic web systems. You’re expected to think beyond standard backend patterns and reason about model constraints, tradeoffs, and real-world implementation details.

- Behavioral interviews: These go deeper than surface-level storytelling. They probe the thinking behind your past technical decisions. For example, why you chose a particular approach, what you optimized for, and what you’d change in hindsight.

Across all rounds, time pressure is real. Many candidates say they run out of time at some point in the loop.

Here’s a breakdown of what each round covers and the types of questions you should be ready to answer.

Anthropic software engineer interview question examples:

Coding

- Create a task scheduler.

- Build an in-memory database: stage 1 (SET/GET/DELETE) → stage 2 (filtered scans) → stage 3 (TTL with timestamps) → stage 4 (file compression/decompression).

- Build core business logic for a toy banking application.

- Build an OOP system for managing courses, grades, and students.

- Build an OOP app where each stage builds on the last, requiring careful refactoring and design throughout.

- You're given a helper method that does URL crawling. Given a domain name and a main URL, parse through all the URLs and return the total count of URLs matching the given domain.

- Convert nested stack traces into discrete start and end events.

- Given a list of samples each containing a list of function names, generate start and end events for each function across all samples.

- Write a URL crawler, initially synchronous, then make it async and discuss the tradeoffs.

- Given a single Python file on CoderPad, refactor it for readability and correctness.
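To give a feel for the stack-trace questions in the list above, here is one possible reading, sketched under assumptions: each sample is a call stack listed outermost frame first, samples are taken at consecutive ticks, and a function "ends" at the first sample where it is no longer on the stack. The event tuple layout is hypothetical.

```python
from typing import List, Tuple

Event = Tuple[str, int, str]  # (kind, sample_index, function_name)


def stack_samples_to_events(samples: List[List[str]]) -> List[Event]:
    """Diff consecutive stack samples into start/end events."""
    events: List[Event] = []
    prev: List[str] = []
    for t, stack in enumerate(samples):
        # Length of the shared prefix between the previous and current stack.
        common = 0
        while common < min(len(prev), len(stack)) and prev[common] == stack[common]:
            common += 1
        # Frames that disappeared end now, innermost first.
        for name in reversed(prev[common:]):
            events.append(("end", t, name))
        # Frames that appeared start now, outermost first.
        for name in stack[common:]:
            events.append(("start", t, name))
        prev = stack
    # Close anything still running after the last sample.
    for name in reversed(prev):
        events.append(("end", len(samples), name))
    return events


samples = [
    ["main"],
    ["main", "parse"],
    ["main", "parse", "read"],
    ["main", "render"],
]
for event in stack_samples_to_events(samples):
    print(event)
```

A typical follow-up is how to handle recursion or a function that exits and re-enters between two samples, which this sketch cannot distinguish from a single long call.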

System design

- Design the Claude chat service.

- Design a distributed search system for 1 billion documents at 1 million QPS. Cover sharding, caching, and LLM inference scaling.

- Design a batched inference system where 100 requests take the same time as 1. Use a queue to batch requests.

- Design a system that enables a large language model to handle multiple questions in a single thread.

- Design APIs for developers to access Anthropic's AI models securely and efficiently.

- Design a file-sharing / distribution system.

- Design a high-concurrency inference API / parallel processing pipeline.
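
The queue-based batching idea in the batched inference question can be sketched roughly as follows. This is a simplified, single-process illustration using `asyncio`; `fake_model`, `Batcher`, `max_batch`, and `max_wait` are hypothetical names, and the sleep stands in for a model call whose latency is assumed not to grow with batch size.

```python
import asyncio
from typing import List, Tuple


async def fake_model(prompts: List[str]) -> List[str]:
    """Stand-in for a model call; one call serves the whole batch."""
    await asyncio.sleep(0.05)
    return [p.upper() for p in prompts]


class Batcher:
    def __init__(self, max_batch: int = 100, max_wait: float = 0.01) -> None:
        self.queue: asyncio.Queue = asyncio.Queue()
        self.max_batch = max_batch
        self.max_wait = max_wait
        self.batch_sizes: List[int] = []  # recorded for inspection only

    async def submit(self, prompt: str) -> str:
        """Enqueue one request and wait for its individual result."""
        future = asyncio.get_running_loop().create_future()
        await self.queue.put((prompt, future))
        return await future

    async def run(self) -> None:
        """Worker loop: collect requests into a batch, call the model once."""
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self.queue.get()]
            deadline = loop.time() + self.max_wait
            # Keep collecting until the batch is full or the wait budget is spent.
            while len(batch) < self.max_batch:
                timeout = deadline - loop.time()
                if timeout <= 0:
                    break
                try:
                    batch.append(await asyncio.wait_for(self.queue.get(), timeout))
                except asyncio.TimeoutError:
                    break
            self.batch_sizes.append(len(batch))
            outputs = await fake_model([prompt for prompt, _ in batch])
            for (_, future), output in zip(batch, outputs):
                future.set_result(output)


async def demo(n: int = 100) -> Tuple[List[str], List[int]]:
    batcher = Batcher()
    worker = asyncio.create_task(batcher.run())
    results = await asyncio.gather(*(batcher.submit(f"q{i}") for i in range(n)))
    worker.cancel()
    return list(results), batcher.batch_sizes
```

Calling `asyncio.run(demo(100))` returns the 100 results in request order along with the batch sizes the worker actually used. In an interview, the interesting discussion is usually the tradeoff between `max_wait` (added latency for the first request in a batch) and throughput.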

Behavioral

- Talk about a past project you've worked on.

- Walk me through a project you owned end-to-end. What were the key technical decisions?

- Tell me about a technical misjudgment that delayed a project.

If you’re preparing for this track, it’s worth spending time practicing. These resources should give you a great head start:

- AI engineer interview questions

- Coding interview prep guide

- System design interview guide

- Anthropic system design interview guide

- ML system design interview guide

- Generative AI system design interview guide

- Software engineer behavioral interview guide

3.2 Anthropic research engineer interview questions

Research engineer candidates go through a process similar to that of software engineers, with the addition of ML theory and AI safety rounds.

The coding assessment follows the same CodeSignal format as the SWE track, but the specific problem area is shared in advance by email so candidates know what to expect before they start.

Anthropic research engineer interview question examples:

Coding

- Develop a banking application from scratch. Tier 1: record and hold transactions (deposits and transfers); tier 2: data metrics returning the top k accounts by outgoing money; tier 3: add scheduled transactions and cancellations; tier 4: merge two accounts while maintaining separate account histories.

- How do you parallelise this task?
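
As a rough illustration of the first two tiers of the banking question, here is a minimal sketch covering deposits, transfers, and a top-k query by outgoing money. The class and method names, the use of integer amounts, and the tie-breaking rule are assumptions for illustration; the real prompt defines its own interface.

```python
import heapq
from collections import defaultdict
from typing import Dict, List, Tuple


class Bank:
    """Toy bank. Method names and rules are illustrative assumptions."""

    def __init__(self) -> None:
        self.balances: Dict[str, int] = {}
        self.outgoing: Dict[str, int] = defaultdict(int)

    # Tier 1: record deposits and transfers
    def deposit(self, account: str, amount: int) -> int:
        """Add money to an account (creating it if needed); return the balance."""
        self.balances[account] = self.balances.get(account, 0) + amount
        return self.balances[account]

    def transfer(self, src: str, dst: str, amount: int) -> bool:
        """Move money between two existing accounts; False if not possible."""
        if src == dst or src not in self.balances or dst not in self.balances:
            return False
        if self.balances[src] < amount:
            return False
        self.balances[src] -= amount
        self.balances[dst] += amount
        self.outgoing[src] += amount
        return True

    # Tier 2: top k accounts by total outgoing money
    def top_spenders(self, k: int) -> List[Tuple[str, int]]:
        # Highest outgoing first; ties broken alphabetically by account name.
        return heapq.nsmallest(
            k,
            ((acct, self.outgoing.get(acct, 0)) for acct in self.balances),
            key=lambda item: (-item[1], item[0]),
        )
```

Tracking outgoing totals as transfers happen keeps the tier 2 query cheap, and it leaves room for tier 3 and 4 changes (scheduled transactions, account merges) without recomputing history.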

ML theory and architecture

- Implement QKV attention in PyTorch from scratch

- Explain attention-free transformer architectures and their tradeoffs

- What are the key components of a Transformer model and why do they matter?

- How do scaling laws influence the safety evaluation of large models?

- Describe matrix manipulation relevant to LLM architectures
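
For reference when preparing for the attention question above, the standard scaled dot-product attention that a from-scratch implementation computes is shown below. Here $Q$, $K$, and $V$ are the query, key, and value matrices and $d_k$ is the key dimension; multi-head variants apply the same formula per head.

```latex
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
```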

AI safety

- What do you see as the most pressing unsolved problem in AI alignment?

- How would you design an experiment to test for a specific emergent capability or bias in a large language model?

- How would you balance performance optimisation with model interpretability?

For more information on ML system design fundamentals, read our ML system design guide.

3.3 Anthropic research scientist interview questions

Research scientist candidates complete the same CodeSignal coding assessment as software engineers, but the rest of the process is more research-focused.

Interviews typically include ML and systems questions that probe your understanding of model architectures, training dynamics, scaling behavior, and evaluation methods. There is also a SQL or data reasoning component, where you’re expected to work through structured data problems and explain your thinking clearly.

The biggest difference is the research presentation round. Candidates present a past research project to a group of Anthropic researchers, who then engage in a detailed discussion. Interviewers typically focus on:

- Your problem framing and motivation

- Experimental design and methodology

- Assumptions and limitations

- How you validated results

- Alternative approaches you considered

This round is designed to assess how you reason about research problems, not just what you’ve built.

Anthropic research scientist interview question examples:

Research presentation

- Present your past research: hypotheses, methods, results, and limitations

- What would you explore next if you had more time on your presented research?

- How would you make complex AI research findings accessible to audiences without a technical background, such as policymakers or ethicists?

ML and systems

- How would you approach designing a system to ensure the safe deployment of AI models in production?

- How would you design an experiment to test for a specific emergent capability or bias in a large language model?

- How would you collaborate with engineering, policy, and ethics teams to align technical solutions with safety requirements?

SQL and data reasoning

- Write a query to find the top five pairs of products most frequently purchased together by the same user

- Determine whether any user has overlapping subscription date ranges among completed subscriptions

- Select the top three departments (with at least 10 employees) ranked by the percentage of employees earning over $100K

- Return the two students with the closest SAT scores, including the score difference, breaking ties alphabetically

- Retrieve each employee's current salary after an ETL error inserted (instead of updated) a new salary row every year
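
To make the last question above concrete, here is one possible approach, run through Python's built-in `sqlite3` module so it can be tried locally. The table layout (`employees` with an auto-incrementing `id`, `first_name`, `last_name`, `salary`) and the assumption that the most recently inserted row has the highest `id` are ours, not part of the original question.

```python
import sqlite3
from typing import List, Tuple

# Pick, for each employee, the row with the highest id (assumed to be the latest).
QUERY = """
SELECT e.first_name, e.last_name, e.salary
FROM employees AS e
JOIN (
    SELECT first_name, last_name, MAX(id) AS latest_id
    FROM employees
    GROUP BY first_name, last_name
) AS latest
  ON e.id = latest.latest_id
ORDER BY e.first_name, e.last_name
"""


def current_salaries(rows: List[Tuple[str, str, int]]) -> List[Tuple[str, str, int]]:
    """Load rows into an in-memory table and return each employee's latest salary."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE employees ("
        "id INTEGER PRIMARY KEY, first_name TEXT, last_name TEXT, salary INTEGER)"
    )
    conn.executemany(
        "INSERT INTO employees (first_name, last_name, salary) VALUES (?, ?, ?)",
        rows,
    )
    result = conn.execute(QUERY).fetchall()
    conn.close()
    return result
```

Note that the query selects by latest row rather than by `MAX(salary)`, since a salary can go down from one year to the next.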

Coding

The coding assessment uses the same CodeSignal format as the SWE and research engineer tracks. Some candidates have reported receiving starter code rather than starting from scratch, though this may vary by role or hiring cohort.

For preparation, read our coding interview prep guide.

3.4 Anthropic product manager interview questions

Product manager candidates face behavioral questions in the hiring manager screen and a case study component in the final loop. For the case study, you’ll be given:

- Two written prompts requiring a 2-pager response

- A PowerPoint presentation to prepare based on those prompts

The final loop consists of five panel interviews conducted on the same day.

Anthropic's PM questions are notably more focused on safety than most other tech companies.

As with the engineering manager role, few Anthropic PM interviews have been reported on Glassdoor so far. For that reason, the list below also includes a few of the most common PM interview questions asked at other top tech companies.

Anthropic product manager interview question examples:

Behavioral

- Tell me about recent products you've helped lead.

- What KPIs and data insights did you use for those products?

- If I asked your current team to describe you as a product manager in one word, what would they say?

- Tell me about a time you had to make a difficult decision.

Product sense

- How would you prioritise between a capability improvement and a safety improvement on the same roadmap?

- How would you define success metrics for a new Claude feature that balances user value and risk reduction?

- How do you translate alignment or interpretability research findings into product requirements?

- Pick your favorite app. How would you improve it? (OpenAI)

- Should Meta enter the dating / jobs market? (Meta)

Product analytics/metrics

If you want to go deeper per topic, you can find more practice questions in our product sense interview questions guide and product metrics interview questions guide.

4. How to prepare for an Anthropic interview ↑

We've coached more than 20,000 candidates for interviews at top tech companies since 2018. Below are the three most effective things you can do to prepare.

4.1 Learn by yourself

Learning by yourself is an essential first step. We recommend you make full use of the free prep resources on the IGotAnOffer blog.

Here are a few more interview guides you might find helpful during your prep:

For Anthropic:

- Anthropic interview process guide

- Anthropic culture interview guide

- Anthropic system design interview guide

- How to answer the "Why Anthropic" interview and application question

Role-specific Anthropic interview guides:

By skill area:

- Behavioral interview questions

- Coding interview prep guide

- System design interview guide

- ML system design interview guide

- Generative AI system design interview guide

If you're looking into other AI/ML-forward tech companies, we recommend reading the following company guides:

- OpenAI interview process

- OpenAI system design interviews

- OpenAI coding interviews

- OpenAI behavioral interviews

- How to answer the "Why OpenAI" interview and application question

- 30+ common OpenAI interview questions + answers (by role)

- OpenAI product manager interview

- OpenAI SWE interview

- NVIDIA software engineer interview

- NVIDIA PM interview process

Once you have a strong foundation in the material, the next step is practicing under real conditions. But by yourself, you can’t simulate thinking on your feet or the pressure of performing in front of a stranger. Plus, there are no unexpected follow-up questions and no feedback.

That’s why many candidates try to practice with friends or peers.

4.2 Practice with peers

If you have friends or peers who can do mock interviews with you, that's an option worth trying. It’s free, but be warned, you may come up against the following problems:

- It’s hard to know if the feedback you get is accurate

- They’re unlikely to have insider knowledge of interviews at your target company

- On peer platforms, people often waste your time by not showing up

For those reasons, many candidates skip peer mock interviews and go straight to mock interviews with an expert.

4.3 Practice with experienced interviewers

In our experience, practicing with an expert who can give you feedback specific to Anthropic's process in real time makes the biggest difference.

Find an expert tech interview coach so you can:

- Practice under real interview conditions

- Get accurate feedback from someone with direct experience of the process

- Build confidence under pressure

- Learn which stories to tell and how to tell them well

- Focus your preparation on what actually moves the needle

Landing a job at a big tech company like Anthropic often increases your total compensation by $50,000 per year or more. In our experience, three or four coaching sessions, costing around $500 in total, make a significant difference in your ability to land the job. That’s an ROI of 100x!